ISO 42001: AI Governance & Compliance Guide

Artificial intelligence is transforming how organizations operate, but it also introduces risks such as bias, limited transparency, and increasing regulatory scrutiny.

To address these challenges, organizations require a structured and auditable approach to AI governance. ISO 42001 is an international standard that specifies requirements for establishing and maintaining an Artificial Intelligence Management System (AIMS).

Adopting this framework can support organizations in managing AI risks and strengthening governance practices.

In this article, I will explain what ISO 42001 is, along with its key requirements, comparisons, and implementation considerations.

What is ISO 42001?

ISO/IEC 42001 is a certifiable international standard that defines requirements for establishing, implementing, maintaining, and improving an Artificial Intelligence Management System (AIMS). It provides companies with a risk-based framework to govern AI systems responsibly, transparently, and safely throughout their lifecycle.

The standard helps companies manage AI risks such as bias, limited explainability, and unclear operational purposes while keeping their use of AI consistent with the company’s goals.

ISO 42001 is a broad standard. It is meant for companies of all sizes that create AI systems, provide AI services, or use AI services from other companies.

Because ISO 42001 is certifiable, companies may be independently assessed by an accredited certification body for conformity with the standard. Certification indicates that the organization’s AIMS has been evaluated against ISO 42001 requirements within the scope of the audit.

A risk-based approach forms the basis of the standard. As a result, companies are expected to implement measures that are proportionate to the level of risk and the potential impact of their AI systems.

Key Components of ISO 42001

To make implementation practical, the standard breaks down AI governance into several core areas. Here is a look at the foundational components you will need to establish:

3.1 Leadership & Governance

Top management should demonstrate leadership and commitment to the AI management system by developing an AI policy that aligns with organizational objectives. This includes articulating the organizational strategy, assigning clear roles, tasks, and accountability, and championing them across the AI governance process.

3.2 Risk Management & Planning

Companies should be able to identify, analyze, and manage risks associated with AI systems. These range from ethical risks, such as unfair bias and lack of transparency, to operational risks, such as compromised data, model failures, and security threats. Mitigation measures should be proportionate to the level of risk and its impact.
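To make the idea of proportionate risk treatment concrete, here is a minimal sketch of a risk register in Python. ISO 42001 does not prescribe a scoring method, so the 1 to 5 scales, the likelihood-times-impact score, the thresholds, and the example risks are all my own assumptions for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AIRisk:
    name: str
    likelihood: int  # hypothetical scale: 1 (rare) to 5 (almost certain)
    impact: int      # hypothetical scale: 1 (negligible) to 5 (severe)

    @property
    def score(self) -> int:
        # Simple likelihood x impact score; other scoring schemes are possible.
        return self.likelihood * self.impact


def treatment_level(risk: AIRisk) -> str:
    """Map a risk score to a treatment tier. Thresholds are illustrative only."""
    if risk.score >= 15:
        return "high"
    if risk.score >= 6:
        return "medium"
    return "low"


# Made-up example risks covering ethical and operational categories.
risks = [
    AIRisk("unfair bias in loan scoring", likelihood=3, impact=5),
    AIRisk("model failure in support chatbot", likelihood=2, impact=2),
    AIRisk("training data compromise", likelihood=2, impact=4),
]

# List the highest-scoring risks first so they are treated first.
for risk in sorted(risks, key=lambda r: r.score, reverse=True):
    print(f"{risk.name}: score={risk.score}, treatment={treatment_level(risk)}")
```

In practice, each tier would map to a documented set of mitigation measures, with the strictest controls reserved for the highest tier.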

3.3 Support & Resources

Companies must provide adequate support to implement the AIMS effectively, for example, skilled personnel, training, and documentation. Staff should be trained on AI governance, and the AIMS policy should be communicated openly.

3.4 AI Operations

This section covers the practical, day-to-day management of the AI system lifecycle. It involves developing and implementing clear and repeatable procedures for data governance and the model lifecycle (i.e., building, testing, validating, deploying, and monitoring). Controls are intended to support consistency, traceability, and reliability across AI operations.
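One way to picture a repeatable, traceable lifecycle procedure is a small stage gate that only lets a model move forward one stage at a time and records who approved each step. This is a sketch under my own assumptions: the `ModelRecord` class, the approver names, and the rule that stages cannot be skipped are hypothetical and not taken from the standard.

```python
from enum import IntEnum


class Stage(IntEnum):
    # Stages follow the lifecycle named in this section.
    BUILD = 1
    TEST = 2
    VALIDATE = 3
    DEPLOY = 4
    MONITOR = 5


class ModelRecord:
    """Tracks one model's lifecycle stage and keeps a simple audit trail."""

    def __init__(self, name: str) -> None:
        self.name = name
        self.stage = Stage.BUILD
        self.history = [(Stage.BUILD, "created")]

    def advance(self, approved_by: str) -> Stage:
        """Move to the next stage; stages cannot be skipped."""
        if self.stage is Stage.MONITOR:
            raise ValueError(f"{self.name} is already in the final stage")
        self.stage = Stage(self.stage + 1)
        self.history.append((self.stage, approved_by))
        return self.stage


record = ModelRecord("churn-model")      # hypothetical model name
record.advance(approved_by="qa-lead")    # BUILD -> TEST
record.advance(approved_by="risk-team")  # TEST -> VALIDATE
print(record.stage.name, record.history)
```

The `history` list is what gives the process traceability: an auditor can see each transition and who signed off on it.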

3.5 Performance Evaluation

Companies are expected to track, measure, and assess the results of the AI management system. This is done through internal audits, system reviews, and management reviews to ensure that the controls in place are relevant and effective.

3.6 Continuous Improvement

ISO 42001 requires continual improvement of AI governance practices. This can be done by taking corrective and preventive actions, refining controls in response to current and expected changes, and improving processes as the risks associated with AI systems evolve.

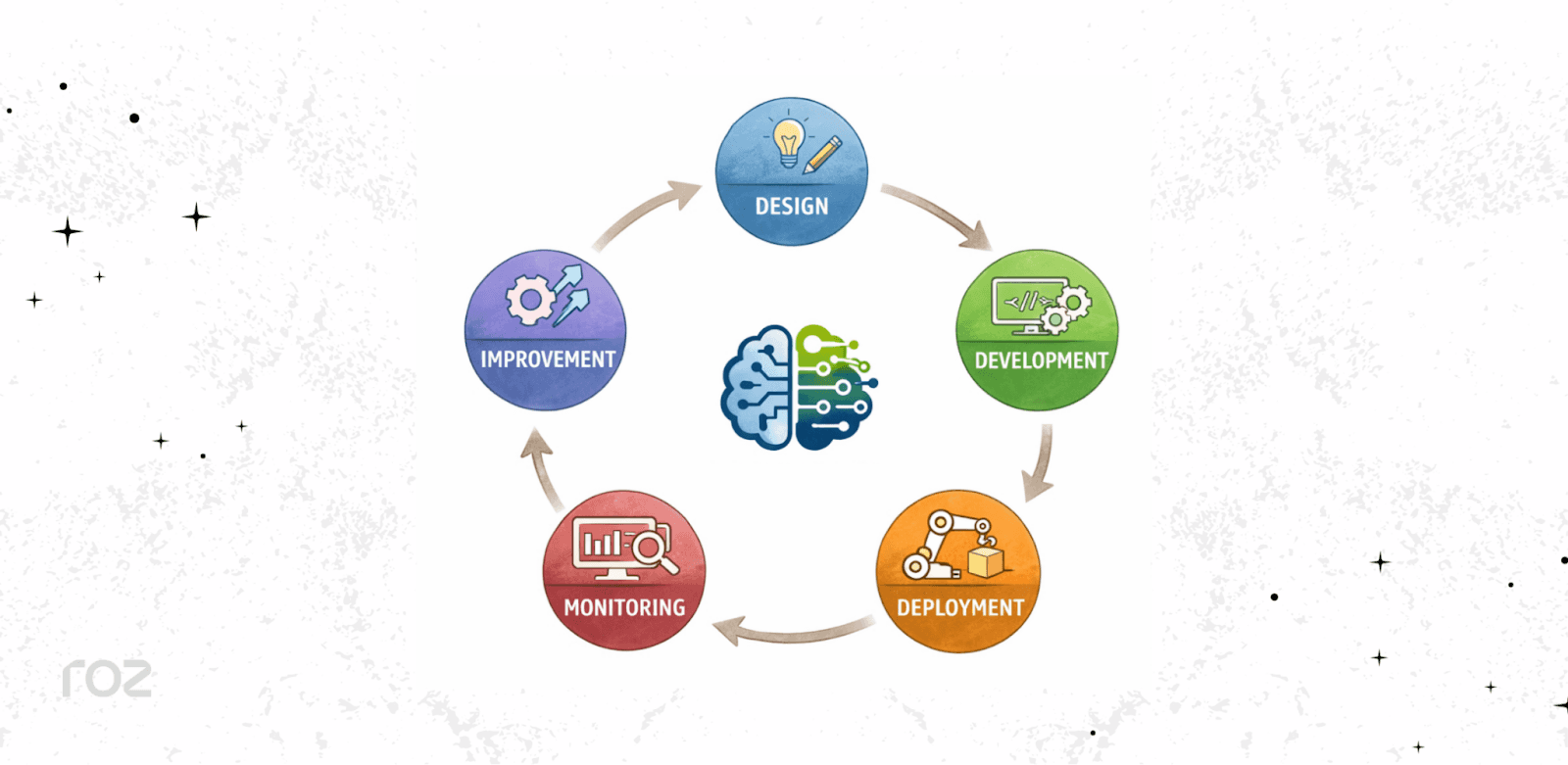

The ISO 42001 AI Management System

An Artificial Intelligence Management System (AIMS) is a combination of policies, processes, and procedures that together form a framework for governing the use of AI systems within a company.

Under ISO 42001, an AIMS provides documented and consistent methods for identifying and managing the risks associated with using AI technology and systems within a company.

A fundamental tenet of ISO 42001 is the lifecycle approach to the governance of AI. This means governance is applied consistently at every phase of an AI system's life.

A typical AI lifecycle within an AIMS is shown in the image below:

Along with these phases, a well-designed AIMS includes a governance structure that defines responsibilities, roles, and accountability for AI.

A good AIMS includes well-defined and documented processes to support governance and audit preparedness; comprehensive processes to support consistency and completeness in the analysis of the operational, ethical, and regulatory risks associated with AI systems; and processes to support appropriate risk control measures.

ISO 42001 Controls (Annex A)

Annex A of ISO 42001 provides a comprehensive set of control objectives and controls that are meant to aid in the implementation of an AIMS. Organizations use these controls to address the risks identified during the planning and risk assessment phase.

The controls are intended to be applied flexibly in accordance with the organization’s unique context, use cases for AI, and risk assessment.

Implementing these controls helps operationalize AI governance principles. Some of the more notable control areas are:

AI governance policies: Creating documented policies and procedures that articulate how the company’s AI systems are built, operated, and utilized.

Data quality and integrity: Ensuring AI systems are trained and operated on data that is accurate, relevant, and collected in compliance with the law.

Bias detection and mitigation: Putting systems in place to detect, evaluate, and minimize the risk of biased or discriminatory outcomes of AI systems.

Transparency and explainability: Maintaining well-documented controls that help stakeholders understand how AI systems operate and how they arrive at their decisions.

Human supervision: Clearly defining, communicating, and applying when and how people step in to assess the output of AI and to act in the event of unexpected or high-risk outcomes.

Security and resilience: Safeguarding AI systems against threats, including adversarial manipulation, and ensuring that they are trustworthy and consistent regardless of circumstance.
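As an illustration of what a bias detection check from the control areas above might look like in practice, here is a minimal Python sketch that compares approval rates across groups (often called a demographic parity check). The standard does not mandate this metric. The group labels, the sample decisions, and the 0.2 review threshold are assumptions I made up for the example.

```python
from collections import defaultdict


def selection_rates(outcomes):
    """Return the share of positive outcomes per group.

    outcomes: list of (group, approved) pairs, where approved is True or False.
    """
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, approved in outcomes:
        totals[group] += 1
        positives[group] += int(approved)
    return {group: positives[group] / totals[group] for group in totals}


def parity_gap(outcomes):
    """Difference between the highest and lowest group selection rates."""
    rates = selection_rates(outcomes)
    return max(rates.values()) - min(rates.values())


# Made-up decisions from a hypothetical AI system for two groups.
decisions = [
    ("A", True), ("A", True), ("A", False), ("A", True),
    ("B", True), ("B", False), ("B", False), ("B", False),
]

REVIEW_THRESHOLD = 0.2  # hypothetical tolerance set by the organization

gap = parity_gap(decisions)
needs_review = gap > REVIEW_THRESHOLD
print(f"parity gap={gap:.2f}, needs human review={needs_review}")
```

A gap above the threshold would not prove discrimination on its own, but it is the kind of signal that could trigger the human supervision step described in the list.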

Who Should Implement ISO 42001?

Organizations across industries that use AI systems may consider implementing ISO 42001. It is particularly relevant to any organization that builds, deploys, or integrates AI systems into its operations.

Typically, the following types of companies pursue ISO 42001:

Companies that need governance throughout the entire AI lifecycle, whether they build proprietary AI systems or foundational models.

Companies that incorporate AI systems, particularly where the AI supports internal decision-making or operational activities.

Software as a Service (SaaS) providers and other tech companies that use AI in the tools, products, and services they offer to the public.

Entities in regulated sectors such as finance, healthcare, and insurance, where there is a strong need for accountability, clarity, and risk management.

In short, ISO 42001 is relevant to any company that creates, implements, or uses AI systems and wants structured governance, risk management, and sound AI management practices.

The ISO 42001 Audit & Certification Process

Certification may demonstrate that an organization’s AI management system has been assessed against the standard and aligns with international market expectations. The process consists of the following steps:

7.1 Gap Assessment

Each company starts with a gap analysis that compares its current AI governance practices with the expectations of ISO 42001. This helps uncover gaps in controls, documentation, and procedures. The gap analysis also helps set the scope for further actions and prioritize the most important ones.
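At its simplest, a gap analysis is a comparison between what is expected and what is already in place. The Python sketch below shows that comparison as a set difference. The area names are my own shorthand for the control areas discussed in this article, not official ISO 42001 control identifiers, and the "implemented" set is a made-up example.

```python
# Hypothetical shorthand labels for the control areas covered in this article.
REQUIRED_AREAS = {
    "ai_policy",
    "data_quality",
    "bias_mitigation",
    "transparency",
    "human_oversight",
    "security",
}


def gap_report(required, implemented):
    """Return the missing areas (sorted) and the fraction already covered."""
    missing = sorted(required - implemented)
    coverage = len(required & implemented) / len(required)
    return missing, coverage


# Made-up current state for an example organization.
implemented_today = {"ai_policy", "data_quality", "security"}

missing, coverage = gap_report(REQUIRED_AREAS, implemented_today)
print(f"coverage: {coverage:.0%}")
print("gaps to prioritize:", ", ".join(missing))
```

A real assessment would go deeper than a yes or no per area, for example by rating maturity or attaching evidence, but the output is the same in spirit: a prioritized list of gaps.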

7.2 Implementation

After the gap analysis, each company plans the actions it needs to take and sets up its AIMS. This is done by establishing AI policies, implementing the relevant AI controls, and creating the documentation needed to support the governance policies.

7.3 Internal Audit

Organizations conduct internal audits to verify that the AIMS has been fully implemented. The audit surfaces nonconformities and helps identify where further refinements are needed.

7.4 Certification Audit

An accredited external body performs the certification audit, which typically has two stages:

Stage 1 (Readiness Review): The external auditor will analyze your documentation, scope, and how ready you are for the audit. This is like a dress rehearsal to see if your organization is ready.

Stage 2 (Certification Audit): The auditor looks at your AIMS and evaluates whether it has been effectively implemented and the controls are functioning as intended.

Before any certification can be given, you'll need to respond to any issues the auditor uncovers (nonconformities).

7.5 Continuous Monitoring

Maintaining your AIMS is a requirement for ISO 42001 certification. Your company will need to conduct ongoing monitoring, periodic internal audits, and management reviews. Certification bodies will conduct surveillance audits in the period between the certification audit and the recertification audit.

ISO 42001 vs Other AI Regulations

The global landscape for AI governance is changing quickly. Frameworks such as the NIST AI Risk Management Framework and the OECD AI Principles provide guidance, while regulations such as the EU AI Act establish legal requirements. The EU AI Act entered into force in 2024 with phased implementation timelines and takes a regulation-based approach to managing risk.

ISO 42001 may align with some of the same governance goals as emerging AI regulations, but it serves a different purpose. It is a management-system standard for structuring internal AI governance, whereas regulations impose legal obligations.

ISO 42001 can help a company implement AI in line with regulation; however, certification is not tied to any specific law and does not by itself establish legal compliance.

| Area | ISO 42001 | AI Regulations (e.g., EU AI Act) |
| --- | --- | --- |
| Nature | Voluntary standard | Legal requirement |
| Focus | Governance framework | Compliance obligations |
| Scope | Globally applicable | Region-specific |

Common Challenges in Implementing ISO 42001

Implementing an AIMS can present challenges, especially for companies adapting to rapid changes in AI technology. Common challenges include:

Limited visibility into AI systems: Many companies don't have a system for tracking all of their AI use cases. This includes ‘shadow AI,’ where employees use third-party AI tools without the company’s knowledge.

Data quality and governance gaps: For older, decentralized data sets, it is especially challenging to document data lineage and controls regarding user consent and data quality.

Lack of standardized processes: Differences between engineering, governance, and compliance teams may result in a lack of uniformity in the development and operation of AI systems.

Rapid evolution of AI models: AI systems must be monitored continuously, and risk assessments must be updated whenever a system is retrained or modified.

Documentation complexity: Producing, documenting, and auditing evidence across the AI lifecycle consumes significant resources.

How Roz Supports ISO 42001 Engagements

For CPA firms and advisory teams supporting ISO 42001 readiness, managing documentation and evidence can be complex. Our tool helps streamline this process through structured, AI-assisted audit delivery.

Roz supports teams by:

Organizing documentation and evidence in structured workspaces for each client.

Extracting controls and mapping them to specific ISO 42001 requirements.

Answering questionnaires by referencing source-linked documentation.

Generating draft workpapers that include audit trails and full traceability.

Identifying documentation gaps with a first-pass analysis.

By structuring workflows and reducing manual effort, our tool helps teams deliver ISO 42001 engagements more efficiently and consistently.

Conclusion

ISO 42001 addresses the biggest concerns around AI, including ethical, legal, and reputational risks. As the laws governing AI evolve and new legal frameworks emerge, ISO 42001 offers a structured system for organizations to adopt.

AI governance is a process that never ends. It presents both opportunities and challenges. Organizations that establish governance early are better positioned to manage complexity as AI systems evolve.

I hope this article has helped you better understand ISO 42001 and AI governance.